CRAM format notes

CRAM files are compressed versions of BAM files containing (aligned) sequencing reads. They represent a further file size reduction for this type of data that is generated at ever increasing…

Life as a Bioinformatics Freelancer: The tools

Some information on what technology I used as a consulting bioinformatics scientist.

Life as a Bioinformatics Freelancer: Finding work

Some ideas for bioinformatics freelancers to find work and advertise themselves to the world.

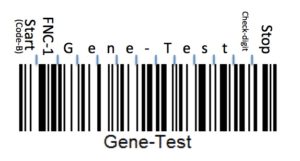

Encoding information with barcodes

Labeling samples with barcodes can make working with large sample numbers easier and less error-prone.

- « Previous Page

- 1

- 2

- 3

- 4

- 5

- …

- 10

- Next Page »